From Latent Knowledge Gathering to Side Information Injection in Discrete Sequential Models

Mehdi Rezaee

Tue. Dec. 5, 2023, 3-5 pm ET, ITE 325B and online

Committee: Drs. Frank Ferraro (Chair), Seung Jun Kim, Tim Oates, Cynthia Matuszek, and Niranjan Balasubramanian (Stony Brook Univ.)

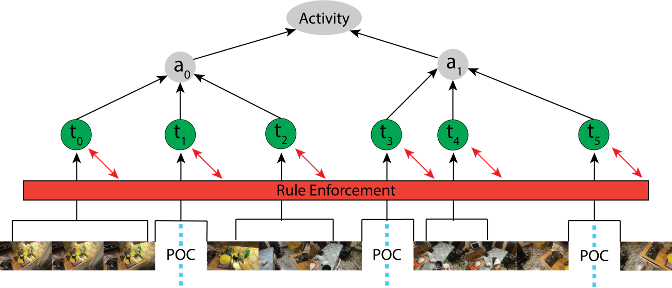

Representation learning aims to extract relevant information from data and to represent the input in a way that is sufficient for performing a task. The problem is especially difficult when the data is both sequential and discrete, as in natural language processing (NLP). From classical methods like topic modeling to modern transformer-based architectures, one seeks to use the information available in the data, or transferable knowledge, to learn richer representations. To that end, current state-of-the-art models rely on two major strategies. a) Latent Knowledge Gathering, where we encourage a model to recognize semantic and thematically relevant knowledge contained within the training data. Methods include clustering techniques like topic modeling and document classification. b) Injecting Background Information, where the goal is to exploit structural or representational priors, such as pretrained models or word embeddings, to facilitate the training phase.

Irrespective of the architecture or task, training invariably begins by encoding high-dimensional documents into more manageable, low-dimensional latent representations. We advocate optimizing these representations to capture and use more pertinent information, which enhances their efficacy in various language-based tasks. Taking document classification as an example of semantic analysis, both the encoder and the decoder are vital for extracting essential information from the inputs, especially when training data is limited. Our extensive experiments assess the capabilities of models across various data regimes and highlight the importance of efficient representations for the situation entity classification task.

In thematic analysis, despite notable advances, many previous studies have overlooked valuable word-level information, such as the latent thematic topics pertinent to each word. Additionally, the use of auxiliary knowledge has often been confined to basic applications like weight initialization, and some methods simply append external knowledge to the input. Nonetheless, the effective use of information, whether derived directly from the data or drawn from background knowledge, remains a critical factor in document representation. It is essential that the process of gathering information does not compromise the richness of the original data.

First, we offer a novel, lightweight, unsupervised design that shows how to use topic models in conjunction with recurrent neural networks (RNNs) with minimal word-level information loss. Our approach maintains and uses lower-level representations that previous approaches discarded, and it gathers and provides that information to a natural language generation model. We conduct extensive experiments to compare the efficiency of the proposed model with that of previously proposed architectures. The results demonstrate that retaining and exploiting word topic assignments, previously overlooked, leads to new state-of-the-art performance in thematic analysis.

Second, we consider how background (or side) knowledge can be used to guide both model learning and representation learning for text. This side knowledge can itself be structured, and it is often given categorically. However, its sources can be incomplete, so the side knowledge may be structured but only partially observed, which poses challenges for learning. We therefore first focus on incomplete, partially observed side knowledge. We propose a structured, discrete, semi-supervised variational autoencoder framework that uses the provided side knowledge to represent the original input text. The method is designed to use the partially observed knowledge as a guide without imposing limitations on the training phase. We show that our approach robustly handles varying levels of side knowledge observation and leads to consistent performance gains across multiple language modeling and classification metrics.

Finally, we consider scenarios where side knowledge is not just incomplete but also noisy. In this context, we introduce a universal framework for integrating discrete information, based on the information bottleneck principle. The framework includes a thorough theoretical exploration of how side information can be integrated into model parameters. Our theoretical analysis and empirical studies, including a case study on event modeling, show that our approach not only extends and refines previous methods but also significantly enhances performance. The proposed framework lays a robust theoretical groundwork for future research in this domain.