DeepSeek’s language AI rocked the tech industry, but it comes up short on one measure. Lionel Bonaventure/AFP via Getty Images

Manas Gaur

ChatGPT and other AI chatbots based on large language models are known to occasionally make things up, including scientific and legal citations. It turns out that measuring how accurate an AI model’s citations are is a good way of assessing the model’s reasoning abilities.

An AI model “reasons” by breaking down a query into steps and working through them in order. Think of how you learned to solve math word problems in school.

Ideally, to generate citations an AI model would understand the key concepts in a document, generate a ranked list of relevant papers to cite, and provide convincing reasoning for how each suggested paper supports the corresponding text. It would highlight specific connections between the text and the cited research, clarifying why each source matters.

The question is, can today’s models be trusted to make these connections and provide clear reasoning that justifies their source choices? The answer goes beyond citation accuracy to address how useful and accurate large language models are for any information retrieval purpose.

I’m a computer scientist. My colleagues − researchers from the AI Institute at the University of South Carolina, Ohio State University and University of Maryland Baltimore County − and I have developed the Reasons benchmark to test how well large language models can automatically generate research citations and provide understandable reasoning.

We used the benchmark to compare the performance of two popular AI reasoning models, DeepSeek’s R1 and OpenAI’s o1. Though DeepSeek made headlines with its stunning efficiency and cost-effectiveness, the Chinese upstart has a way to go to match OpenAI’s reasoning performance.

Sentence specific

The accuracy of citations has a lot to do with whether the AI model is reasoning about information at the sentence level rather than paragraph or document level. Paragraph-level and document-level citations can be thought of as throwing a large chunk of information into a large language model and asking it to provide many citations.

In this process, the large language model overgeneralizes and misinterprets individual sentences. The user ends up with citations that explain the whole paragraph or document, not the relatively fine-grained information in the sentence.

Further, reasoning suffers when you ask the large language model to read through an entire document. These models mostly rely on memorizing patterns, and they are typically better at picking up those patterns at the beginning and end of long texts than in the middle. This makes it difficult for them to fully understand all the important information throughout a long document.

Large language models get confused because paragraphs and documents hold a lot of information, which affects citation generation and the reasoning process. Consequently, reasoning from large language models over paragraphs and documents becomes more like summarizing or paraphrasing.

The Reasons benchmark addresses this weakness by examining large language models’ citation generation and reasoning.

How DeepSeek R1 and OpenAI o1 compare generally on logic problems.

Testing citations and reasoning

Following the release of DeepSeek R1 in January 2025, we wanted to examine its accuracy in generating citations and its quality of reasoning and compare it with OpenAI’s o1 model. We created a paragraph that had sentences from different sources, gave the models individual sentences from this paragraph, and asked for citations and reasoning.
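The sentence-level setup described above can be sketched in a few lines of Python. This is a minimal illustration, not the Reasons benchmark's code: the helper names `split_sentences` and `build_citation_prompts`, the regex-based sentence splitter and the prompt wording are all hypothetical assumptions made for this example.

```python
import re

# Hypothetical helpers illustrating sentence-level citation requests.
# The splitter and prompt wording are assumptions, not the benchmark's own.

def split_sentences(paragraph: str) -> list[str]:
    """Naively split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [p for p in parts if p]


def build_citation_prompts(paragraph: str) -> list[str]:
    """Build one citation request per sentence instead of one per paragraph."""
    template = (
        "Suggest research papers that support the following sentence, "
        "and explain how each one supports it:\n{sentence}"
    )
    return [template.format(sentence=s) for s in split_sentences(paragraph)]


paragraph = (
    "Neurons encode information in spike timing. "
    "Eye tracking can reveal user intent in interfaces. "
    "Query optimizers estimate the cost of join orders."
)
for prompt in build_citation_prompts(paragraph):
    print(prompt)
    print("---")
```

Because each request carries a single sentence, the model cannot fall back on citing sources that merely fit the paragraph as a whole; it has to justify each citation against that one sentence.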

To start our test, we developed a small test bed of about 4,100 research articles around four key topics that are related to human brains and computer science: neurons and cognition, human-computer interaction, databases and artificial intelligence. We evaluated the models using two measures: F-1 score, which measures how accurate the provided citation is, and hallucination rate, which measures how sound the model’s reasoning is − that is, how often it produces an inaccurate or misleading response.
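The two measures can be illustrated with a short Python sketch. The article does not spell out how the benchmark matches citations or judges hallucinations, so treating citations as sets of paper identifiers, treating hallucinations as yes/no flags per response, and the helper names `citation_f1` and `hallucination_rate` are all assumptions made for illustration.

```python
# Hypothetical helpers: set-based citation matching and yes/no
# hallucination flags are assumptions, not the benchmark's exact method.

def citation_f1(predicted: set[str], gold: set[str]) -> float:
    """F-1 of a model's suggested citations against reference citations."""
    hits = len(predicted & gold)
    if hits == 0:
        return 0.0  # no overlap (or empty sets): precision and recall are 0
    precision = hits / len(predicted)  # share of suggestions that are correct
    recall = hits / len(gold)          # share of reference citations found
    return 2 * precision * recall / (precision + recall)  # harmonic mean


def hallucination_rate(flags: list[bool]) -> float:
    """Fraction of responses judged inaccurate or misleading."""
    return sum(flags) / len(flags) if flags else 0.0


predicted = {"paper_A", "paper_B", "paper_C"}
gold = {"paper_A", "paper_B", "paper_D", "paper_E"}
print(round(citation_f1(predicted, gold), 2))          # 0.57
print(hallucination_rate([True, False, False, True]))  # 0.5
```

In this toy example, precision is 2/3 and recall is 1/2, giving an F-1 of 4/7, or about 0.57. That is why an F-1 score is not exactly the same thing as the share of answers a model got right.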

Our testing revealed significant performance differences between OpenAI o1 and DeepSeek R1 across different scientific domains. OpenAI’s o1 did well connecting information between different subjects, such as understanding how research on neurons and cognition connects to human-computer interaction and then to concepts in artificial intelligence, while remaining accurate. Its performance metrics consistently outpaced DeepSeek R1’s across all evaluation categories, especially in reducing hallucinations and successfully completing assigned tasks.

OpenAI o1 was better at combining ideas semantically, whereas R1 focused on making sure it generated a response for every attribution task, which in turn increased hallucination during reasoning. OpenAI o1 had a hallucination rate of approximately 35% compared with DeepSeek R1’s rate of nearly 85% in the attribution-based reasoning task.

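In terms of accuracy and linguistic competence, OpenAI o1 scored about 0.65 on the F-1 test. The F-1 score balances precision (how many of the suggested citations were correct) against recall (how many of the relevant citations the model found), so, loosely speaking, o1 got about 65% of its citations right. It also scored about 0.70 on the BLEU test, which compares a model's generated text with reference text written by people and is commonly used as a measure of how natural the writing is. These are pretty good scores.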
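For readers curious about what BLEU actually computes, here is a simplified, unsmoothed sentence-level version in Python. It is an illustrative sketch under stated assumptions: whitespace tokenization, a single reference text and the helper name `simple_bleu` are choices made here, and the article does not say which BLEU variant or reference texts the benchmark used. Maintained implementations, such as those in sacreBLEU or NLTK, handle tokenization and smoothing more carefully.

```python
import math
from collections import Counter

# Hypothetical, simplified BLEU: whitespace tokens, one reference, no smoothing.

def ngrams(tokens: list[str], n: int) -> Counter:
    """Count every contiguous n-token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(candidate: str, reference: str, max_n: int = 4) -> float:
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        total = sum(cand_counts.values())
        # Clipped matches: a candidate n-gram counts at most as often
        # as it appears in the reference.
        matched = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        if total == 0 or matched == 0:
            return 0.0  # no smoothing in this sketch
        log_precisions.append(math.log(matched / total))
    # Brevity penalty: penalize candidates shorter than the reference.
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    # Geometric mean of the n-gram precisions, scaled by the penalty.
    return bp * math.exp(sum(log_precisions) / max_n)


reference = "the model cites the paper on neurons and cognition"
candidate = "the model cites a paper on neurons and cognition"
print(round(simple_bleu(candidate, reference), 2))  # 0.6
print(simple_bleu(reference, reference))            # 1.0
```

Swapping the single word "the" for "a" breaks several longer n-gram matches, which is why the score drops to about 0.6 even though only one word changed. Without smoothing, any candidate with no matching 4-gram scores 0, which is one reason BLEU is more often computed over a whole corpus than a single sentence.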

DeepSeek R1 scored lower, with about 0.35 on the F-1 test, meaning that, loosely speaking, it got about 35% of its citations right. Its BLEU score was also lower, at about 0.2, which means its writing wasn't as natural-sounding as o1's. Together, these results show that o1 was not only more accurate but also better at presenting information in clear, natural language.

OpenAI holds the advantage

On other benchmarks, DeepSeek R1 performs on par with OpenAI o1 on math, coding and scientific reasoning tasks. But the substantial difference on our benchmark suggests that o1 provides more reliable information, while R1 struggles with factual consistency.

Though we included other models in our comprehensive testing, the performance gap between o1 and R1 specifically highlights the current competitive landscape in AI development, with OpenAI’s offering maintaining a significant advantage in reasoning and knowledge integration capabilities.

These results suggest that OpenAI still has a leg up when it comes to source attribution and reasoning, possibly due to the nature and volume of the data it was trained on. The company recently announced its deep research tool, which can create reports with citations, ask follow-up questions and provide reasoning for the generated response.

The jury is still out on the tool’s value for researchers, but the caveat remains for everyone: Double-check all citations an AI gives you.

Manas Gaur, Assistant Professor of Computer Science and Electrical Engineering, University of Maryland, Baltimore County

This article is republished from The Conversation under a Creative Commons license. Read the original article.

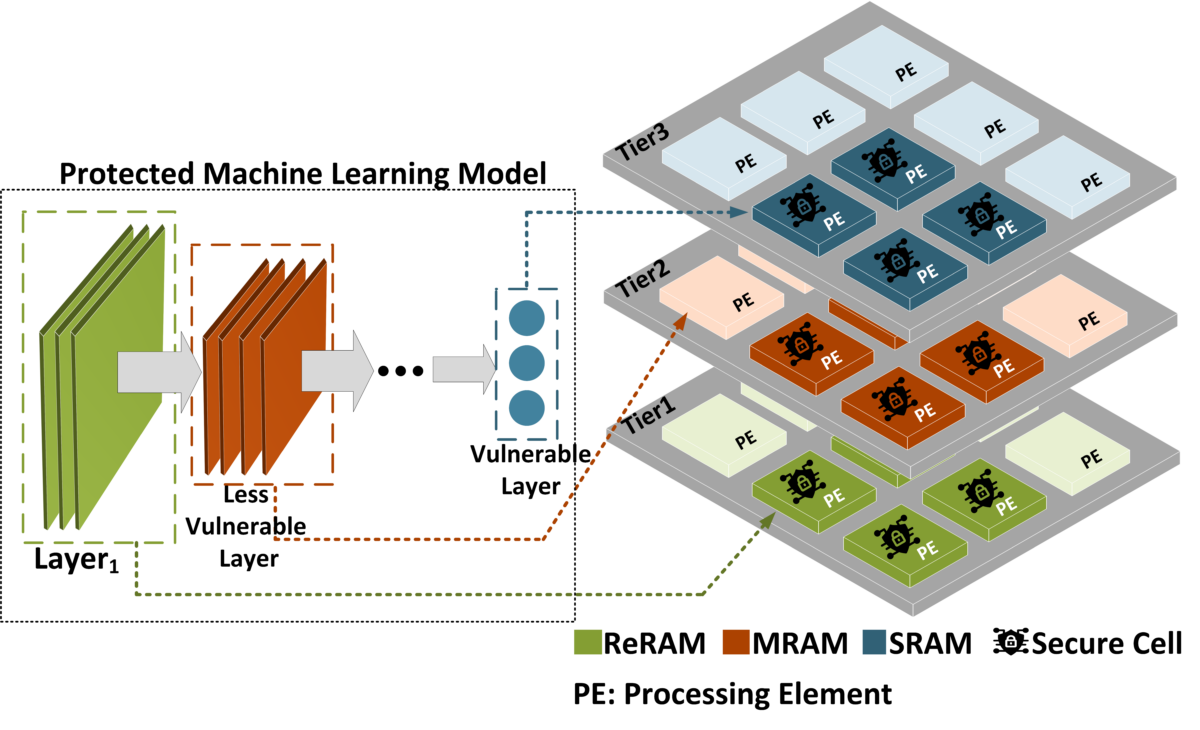

Karimi and her team will study the security of computing-in-memory architectures, as shown in this project overview. (Image courtesy of Karimi)
